Fully Automated Luxury Socialism: The Case for a New Public Sector

With ever more job-killing robots on the horizon, it’s time to demand a new public sphere: one that guarantees not just jobs but leisure too.

There is a little-remembered scene in Joan Didion’s “Slouching Towards Bethlehem,” published fifty years ago last August, where the writer, sleuthing through the menagerie of San Francisco’s counterculture, finds herself in the fleeting company of semi-professional political organizers. Didion, after talking to the groupies at a Grateful Dead rehearsal in Sausalito, feeding hamburgers to a runaway teen couple, and listening to hippies romanticize living “organically” off the land, eating “roots and things,” ends up at the apartment of Arthur Lisch, who is on the payroll of the American Friends Service Committee. When she arrives, Lisch is phoning VISTA (Volunteers in Service to America), one of the agencies created by the Economic Opportunity Act of 1964, to secure government funding for his group and their activities. The influx of teenage homelessness, he says, is creating a social crisis verging on riot. As he coaxes and counsels, a hippie sits in their living room in a psychedelic daze. Mrs. Lisch feeds their children while two Diggers cut up pounds of meat for “the daily Digger feed in the park.” But Lisch, it seems, is driven by a vision beyond bohemia. He “does not seem to notice any of this,” Didion writes. “He just keeps talking about cybernated societies and the guaranteed annual wage and riot on the Street, unless.”

The scene is worth remembering, not only for its portrayal of the diligent and tenuous work of movement building, but for its vision of the future. Today cybernation and the guaranteed annual wage—rebranded as the universal basic income—are having their renaissance. In March 2016 General Motors bought a self-driving-vehicle startup for $1 billion; in October, Qualcomm spent $47 billion on an automobile-chip company, one of several multibillion dollar deals over the past couple of years that saw semiconductor and software companies absorb leading firms in the auto-parts market. “This is almost something that should be a mission of the human species, instead of a company,” says one software executive of driverless cars. In the Harvard Business Review, MIT techno-evangelists Andrew McAfee and Erik Brynjolfsson coo over the falling price of “industrial-grade ML [machine learning] deployments,” in everything from audio and visual recognition to accounting and financial services.

To be a player in tech today means selling policy as well as product. And so the public relations and political lobbying efforts in Silicon Valley have warmed up to what once seemed like a radical idea: the universal basic income. “There is a pretty good chance we end up with a universal basic income . . . due to automation,” Elon Musk told CNBC in November 2016. “I am not sure what else one would do.” In a widely circulated speech to Harvard graduates last May, Mark Zuckerberg joined the chorus, endorsing a universal cash-transfer program financed by a sovereign wealth fund.

As during the 1960s, rapid technological change is again stoking fears of a secular shift toward higher unemployment and a lower rate of labor-force participation. Today this debate turns primarily on impending dislocations in the service sector, led by the automation of private freight, transportation, and retail.

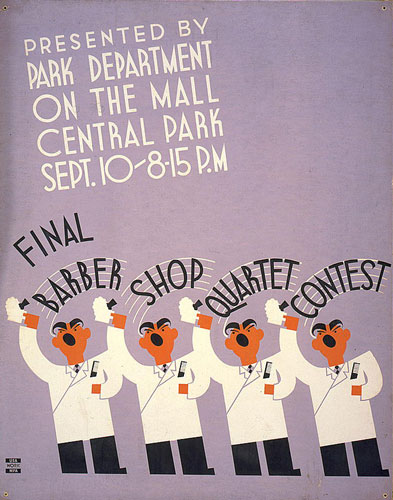

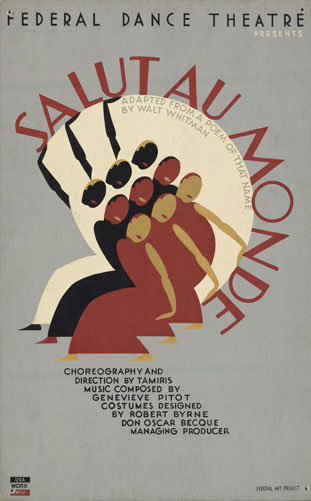

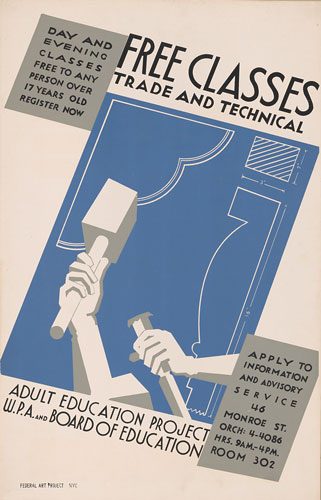

But those who frame the question of technological unemployment in this way—as a problem of lost income and private security for individuals—have lost a key insight of the last period of automation alarmism. At the peak of the postwar boom, forward-thinking intellectuals interpreted the threat of joblessness as a question of how Americans would rediscover meaningful social purpose outside the private workplace. Rather than purely a problem of adequate market income, technological unemployment became a problem of how to help communities organize their leisure time. For the coalition of labor intellectuals and statist liberals forged during the New Deal, expanded cash transfer schemes were not the keystone of any future civilization but mere adjuncts to the dreamed expansion of government-financed community-service jobs. This idea was once commonsensical; for Lisch and for Didion, though they came from very different political backgrounds, it didn’t even bear mentioning. Income supports were secondary to the government jobs at the heart of the bid for a thriving public sphere: for the bettering of schools and the hiring of teachers; for the expanded construction of public housing, gymnasiums, swimming pools, and public parks; for the provision of cleaner air and water; and for improving and expanding mass public transit.

Public services, it was once thought, would be needed for citizens to make free and full use of their expanded leisure time. Cybernated industry made it imperative for government to bring this future into existence.

The word “cybernation” was coined by Donald N. Michael, a researcher whose résumé ranged from NASA to the Peace Research Institute, a precursor to the antiwar Institute for Policy Studies. In 1962, Michael wrote a pamphlet for the Fund for the Republic, a Ford Foundation offshoot, which extended to automation the idea of “cybernetics,” or the study of systems directed by information networks. Titled Cybernation: The Silent Conquest, Michael’s report described a form of automated business planning that had been progressively reducing industry demand for labor. Already draining the economy of jobs, the changing structures of business were filling the ranks of the idle and restless unemployed.

Fifty-five years later, nearly every industry has been “cybernated” in the way Michael used the word. Today, production orders and retail workloads are calculated and scheduled nearly autonomously by the use of point-of-sales software that networks retail stores with warehouses and factories. Delivery fleets are coordinated according to algorithmically selected driving routes. Investment portfolios are composed and recomposed according to automated trading software. Supply chains are planned according to macroeconomic models forecasting future purchases, profits, and employment.

Such changes in industrial organization were once a cause for alarm. Cybernation meant displacement. Michael wrote that many of those in low-paid jobs made redundant by technology would be unable or unwilling to use the new “leisure” time created by businesses’ declining demand for workers. Too many had been taught over generations to value employment, or to aspire to a quality of living unavailable through welfare payments. They were “used to equating work and security.” As long as they aspired to market consumption, people would meet their free time with desperate frustration.

Displacement meant looming upheaval. Those laid off, Michael thought, would reject the “low-cost time-fillers between unemployment checks” such as “watching television.” Others, forced onto shorter hours rather than unemployment, would work multiple jobs. “General insecurity” would encourage the spread of “‘moonlighting’ rather than the use of free time for recreation,” and the quality of skilled labor would deteriorate. Low-wage service and manufacturing jobs would be eliminated and middle-management positions deskilled. Nor would cybernation bypass the service sector; “If people cost more than machines . . . there will be growing incentives to replace them.” And people would cost more, Michael argued, as they conformed to the time-honored pattern of joining labor unions to raise their comparatively low wages.

As productivity climbed and consumption ebbed, the economy threatened to enter a perpetual state of underemployment. Such predictions had been made for nearly a century, and only rose in pitch through the 1950s. As Edwin Nourse, the chairman of Harry Truman’s Council of Economic Advisers, told the Joint Economic Committee, rising productivity and prices in the core industries, paired with falling consumer income, indicated “a critical situation which might, hopefully, be described as pregnant or, apprehensively, as explosive.”

Today many on the left as well as in Silicon Valley envision that an income guarantee, by providing a minimum standard of living, will help to ameliorate the grotesque inequalities of twenty-first-century global capitalism. Michael never mentioned the idea. “An obvious solution,” he instead wrote in 1962, “is a public works program”—one modeled on the Works Progress Administration and the Public Works Administration, the largest civilian public works programs in U.S. history.

But this wasn’t the only daring proposal floating around in response to the automation crisis. In March 1964, as the War on Poverty was taking shape, a group calling itself the Ad Hoc Committee on the Triple Revolution wrote an open letter to President Johnson urging not just a precipitous expansion of government payrolls for public works but “an unqualified right to an income.” First proposed in the convention halls of the molten industrial union movement of the late 1930s, the idea began as a form of private unemployment insurance to combat the business cycle. But it was also a wedge for winning secular changes in the labor market; it would be paired, as autoworkers’ union president Walter Reuther explained it in the 1950s, with a shorter work week.

The Ad Hoc Committee ran with the radical version of this idea. The committee took its name from the “three separate and mutually reinforcing revolutions” then underway: the “cybernation revolution,” which had distorted the labor market and already severed work from income; the threat of mutually assured destruction, which practically removed conventional war from foreign policy; and the rise of civil (and, more broadly, human) rights, which was sweeping away calcified inequalities such as the Jim Crow system of the American South. The committee was a who’s-who of postwar left-liberals, led by heterodox economist Robert Theobald and firmly inside the Democratic Party. Its members included veterans of the trade-union left (A.J. Muste, Bayard Rustin, and Michael Harrington); the New York intellectuals Dwight Macdonald and Dissent editor Irving Howe; the old socialist Norman Thomas and the New Leftists Tom Hayden and Todd Gitlin; the technocratic economists Gunnar Myrdal and Robert Heilbroner; and Stewart Meacham, a Quaker leader in the peace group to which Arthur Lisch belonged. The group was rounded out with a retired brigadier general, a corporate executive, a handful of university administrators, and a few independent professionals. Many had been socialists in the 1930s; nearly all had supported Roosevelt and were calling on Johnson for a similar expansion of public services.

The day after they published their letter, the New York Times responded with a 1,000-word article on the front page. The headline read “Guaranteed Income Asked for All, Employed or Not.” Direct public employment was only mentioned on page twenty. Nevertheless, the bulk of the committee’s analysis and proposals were drawn right out of Donald Michael’s Cybernation report. As long as consumption depended on private employment, they argued, cybernated industries would continue to produce underemployment. Without government employment, the War on Poverty was “bound to fall short.” Jobs could be made to renovate public schools and expand public housing. Hundreds of thousands of new teachers were needed, and could be readily found among the ranks of those whom private industry was increasingly unable to meaningfully employ. Mass rapid transit would move people, public utilities would lower costs, and other “massive public works” such as “air pollution facilities and community recreation facilities” would raise living standards, create employment, and offer communities a greater variety of forms of leisure. For the elderly, disabled, or those uninterested in community life, it was assumed that a guaranteed income would ensure enough consumption to sustain private industry. But for the rest of the population, “new modes of constructive, rewarding and ennobling activity” would be made possible by expanded public services. Building these public systems would absorb untold man-hours made available by cybernation. They would “give hope to the dispossessed and those cast out by the economic system, and . . . provide a basis for the rallying of people.”

Government jobs were imperative because the social life of communities could not be sustained by private employment and impoverished leisure alone. As Michael had written, few Americans were enthusiastic about relying on “the creative use of leisure” for their incomes. As the “chief repository of creative, skilled manual talents,” some could turn to artisanship since “there may be a real if limited demand for hand-made articles” in “a nation living off mass-produced, automatically produced products.” But this group was unique. The dominant groups would be the unskilled thrown out of work on one side and, on the other, a class of “overworked professionals and executives”—the experts left to manage a cybernated society, who would “continue to be overburdened.” The transformation of the private workforce from participants in production to a passively consuming public “bounded closely on one side by the unemployed and on the other by a relatively well-to-do community to which it cannot hope to aspire,” boded an ominous and unpleasant future. “Boredom may drive these people to seek new leisure-time activities if they are provided and do not cost much,” he warned. “But boredom combined with other factors may also make for frustration and aggression and all the social and political problems these qualities imply.”

By 1964, labor-force participation had been falling continuously for eight years to a low of 58.7 percent. If trends continued, the Triple Revolution letter argued, blacks and the poor, those historically at the bottom of the labor market, would predominate among the ranks of the un- and under-employed. “Teenagers, especially ‘drop-outs’ and Negroes, are coming to realize that there is no place for them in the labor force but at the same time they are given no realistic alternative,” they wrote. “Left to the ordinary forces of the market [the future] will involve physical and psychological misery and perhaps political chaos.”

Did history vindicate these Cassandras of cybernation? In the short run, chaos did prevail on the American scene for a decade or more. Beneath the full employment economy created by the Vietnam War, American industrial centers were altered dramatically by technology and the emerging service sector. By the end of the summer of 1964, riots broke out in African-American working-class neighborhoods in New York City and Rochester. The Los Angeles neighborhood of Watts and San Francisco’s Hunters Point followed in 1965 and 1966. By the summer of 1967, when Didion arrived in Haight-Ashbury, civil unrest was wracking over 150 cities. If labor disputes involving more than 1,000 workers are included in this list of civil unrest, order cannot be said to have returned to American urban centers until the mid-1970s.

In the longer run, however, two trends departed from the predictions of generalized social crisis. The first was the reversal of the falling rate of labor-force participation. After bottoming out in 1963 at 59 percent, labor-force participation rose on tides first of war and then of feminism to a peak of 67 percent in the late 1990s. When the Ad Hoc Committee published its findings in 1964, the 59 percent labor-force participation rate represented 81 percent of men and 39 percent of women. When employment rates peaked in 2000, total workforce participation (67 percent) represented 75 percent of men and 60 percent of women; today the numbers are 69 percent of men and 57 percent of women. Manufacturing employment has failed to keep pace with population growth, falling from 27 to 9 percent of all jobs in the same fifty-year period; a growing service sector filled the gap.

The second trend is that these service jobs have by and large not been automated (in part, because face-to-face interaction still commands a premium in up-market retail and hospitality industries). For much of the service sector, the very cheapness of labor has incentivized its use. As federal collective-bargaining machinery steadily narrowed the scope of union activities, as import competition shuttered the most lucrative sources of union dues, and as the unions themselves failed to organize outside of their 1930s-era sectoral strongholds, wages failed to keep pace with total labor productivity. The weakness of the labor movement has eased pressure on employers to invest in labor-replacing equipment. Cashiers, retail salespeople, food-service workers, and office clerks together made up 35 percent of the workforce in 2015, and these payrolls are not eating into business profits. Though it took decades, low-wage private services have presented a way of sustaining a high level of employment.

So why has there been a revival of automation talk? For one, since 2000, the labor force participation rate has again been on the decline. It hit a low around 63 percent in 2014, where it has hovered ever since. Unless another cultural revolution results in a new influx of job seekers, the portion of the population relying on private labor income may continue to shrink.

The other reason is that today the design and manufacture of technologies to replace human services has become a leading growth industry in its own right. Machine-learning applications, for example, could make the call centers of the world a thing of the past, while the potential retail savings from online marketing and self-driving vehicles are enormous. Over the past four decades, labor income has failed to catch up with productivity growth, and still private industry would like to increase margins by cutting payroll.

These new industries will create new and different jobs, it is often argued. True, but there is increasing evidence that automation, while not contributing to joblessness, is a driving force of inequality. Economist Laura Tyson, who was the first chair of Bill Clinton’s Council of Economic Advisers, argues in a recent paper with Michael Spence that “network-based information and communication technology systems . . . by providing immediate access to and analysis of current data across the entire enterprise . . . increase the span of control of top managers and CEOs.” Her conclusions confirm the findings of other economists, such as former Labor Department official David Weil, who argue that outsourcing and subcontracting are management strategies to redistribute income upward within the workforce, and to shift income from wages to profits. With greater managerial efficiency comes higher manager pay—as Tyson points out, almost invariably at workers’ expense.

Leading New Dealers saw this coming, and several of those who lived into the 1960s continued to fret over the increasing divide between the extravagantly paid and the cheaply replaceable. They were swimming against the current: the question of the relative influence and power of organized groups was subsumed by econometric modeling in American economics departments during the 1950s. But those concerned by income inequality today would do well to revisit the New Dealers’ central insight: that policy proposals should seek not only to redistribute resources in the short term but to shift the balance of power between capital and labor. If income supports bolster labor’s bargaining power by providing individuals or groups of workers with an alternative to particular private employers, power and income may be redistributed within firms or industries. But as long as private employment without collective bargaining is the only path to the good life, an income guarantee will primarily serve to sustain underlying power relations. Absent some diffusion of capital ownership or expansion of public investment in community services, private investment will continue to reflect the power and influence of a very small section of the population. Capital markets have demonstrated this point repeatedly. The daily parade of almost offensively extravagant luxury products concocted in Silicon Valley and backed by venture-capital firms (recall the $400 Juicero) has made it clear that markets have no allegiance to the public interest. Many new firms are built on palpable inequalities, and depend on the growth of those inequalities for their own success.

What about government jobs, the new hope of the aging Old Left? Though the Ad Hoc Committee did not get their second New Deal, they wrote at a moment when the trend was toward a growing share of employment by government. Government jobs as a share of total employment rose from roughly 13 percent in 1948 to 17 percent in 1965, before peaking at 19 percent in 1975.

These are almost exclusively service jobs. But unlike those in the private sector, they cater to the public according to legislative statute by elected leaders, rather than the whims of a consumer base increasingly segregated by income and wealth.

After 1975, the trend toward a greater percentage of employment by government reversed. Public-sector employment fell to 17 percent in 1985, and dwindled to just below 16 percent by the end of the Bill Clinton administration. After a brief boost at the height of the last recession, government employment took another blow under the ascendant Tea Party and now the Trump administration. As of October 2017, 22.4 million of 147 million American workers were employed by federal, state, and local governments (including county schools), according to the Bureau of Labor Statistics. That’s about 15.2 percent, the lowest rate in sixty years.

Today, the labor-force participation rate has fallen again. We are living through another period of massive technological change. But unlike fifty years ago, there is no source of good jobs growing quickly enough to match the decline in manufacturing. And now middle-wage service jobs, from truck and cab drivers to hotel workers and journalists, are becoming the most vulnerable to technological displacement. Public spending, representing a little over a third of U.S. GDP since 2013, has been increasingly directed to contractors, which follow the private pattern of concentrating income upward within each firm. “Gaining control of our future requires the conscious formation of the society we wish to have,” the Ad Hoc Committee on the Triple Revolution wrote in 1964. Rather than an ominous future of joblessness, the provisional answer to this question has been an economy of new types of service jobs. But what kind of services, and for whom, will the future provide?

Andrew Elrod is a teaching assistant and a graduate student in the history department at the University of California, Santa Barbara.