The Union Difference: Labor and American Inequality

Under the New Deal order, unions not only sustained prosperity but ensured that it was shared. Since the 1970s, however, the attack on unions has reversed these gains. Today, both union membership and inequality stand roughly at 1920s levels.

This series is adapted from Growing Apart: A Political History of American Inequality, a resource developed for the Project on Inequality and the Common Good at the Institute for Policy Studies and inequality.org. It is presented in nine parts. The introduction laid out the basic dimensions of American inequality and examined some of the usual explanatory suspects. The following parts will develop a political explanation for American inequality, looking in turn at labor relations, the minimum wage and labor standards, job-based benefits, social policy, taxes, financialization, executive pay, and macroeconomic policy. Previous installments in this series can be found here.

The decline of the American labor movement is—for a number of reasons—a pretty good marker for the broader dismantling of the New Deal and, therefore, for the political roots of inequality in the United States. Union losses, as we shall see, account in no small part for rising inequality, especially for men and especially in the 1970s and ’80s. These losses, in turn, have shaped the political environment: midcentury unions, representing a third of the private workforce, fought and won battles over trade, workplace safety, social policy, and civil rights; with union membership at 6.7 percent in the private labor force (2013), those battles (let alone any victories) are growing rarer and rarer.

Union decline has also fed inequality because, in the American context, so much is at stake at the bargaining table. In settings where union coverage is even and expansive, and where workers (and employers) can count on a decent minimum wage and universal health and retirement benefits, the stakes of collective bargaining in the private sector are relatively low. In the United States, by contrast, economic security is shackled to private job-based benefits, and uneven patterns of union coverage have always made it harder to organize bottom-feeding regions and firms. Employer and political opposition, in this respect, is fierce not because the labor movement is strong, but because it has never been strong enough to socialize job-based benefits or to take wages out of competition.

A Short History of American Labor Policy

The American labor movement began the twentieth century claiming coverage of about 10 percent of the labor force, almost all of it (under the mantle of the American Federation of Labor) confined to skilled crafts and trades. An acute labor shortage during World War I pushed that number up to nearly 20 percent by the war’s end, but then employers took the gloves off. The anti-union drives of the 1920s rolled back most of those gains, restoring a pattern of insecurity and low wages (cushioned slightly by employer paternalism).

The arrival of the Great Depression, and with it unemployment rates pushing 25 percent, brought dramatic change. Workers, with little left to lose, launched a series of organizing drives: first in response to economic woes, and then under the legal protection for collective bargaining promised by the National Industrial Recovery Act (1933-1935) and delivered by the National Labor Relations Act (1935).

Just as importantly, New Dealers and progressive business interests came to view the economic crisis, and its persistence, as a consequence of weak aggregate demand in an economy organized around low wages. The new labor relations compact, in this respect, was essentially a recovery strategy: a way of using union power (after the Supreme Court abandoned the New Deal’s brief flirtation with industrial policy) to put money in the pockets of workers (as consumers). This was the culmination of Henry Ford’s effort, floated to much fanfare in 1914 but abandoned soon after, to bridge the gap between mass production and mass consumption by “making 20,000 men prosperous and contented rather than following the plan of making a few slave-drivers in our establishment multi-millionaires.” The combination of democratic workplace institutions, a legal framework for collective bargaining, and dramatic organizing gains presented, in this sense, both a new challenge for employers and, at least for some of them, a way of solving the challenges they already faced.

There were stark limits to union power, which was concentrated in some sectors and in some regions, and slow (or reluctant) to extend its benefits to women and African Americans. And much of the local (community, shop-floor) democratic potential of the new labor movement was eroded by the deals struck during World War II and immediately after, which largely traded militancy for membership. But the aggregate gains were nevertheless impressive. Membership pushed to nearly 40 percent of the labor force, a threshold which positioned the labor movement as a “countervailing power”—not just at the bargaining table but in local, state, and national politics as well.

And it paid off. For the first three decades after World War II, into the early 1970s, both median compensation and labor productivity roughly doubled [click here for graphic]. Labor unions not only sustained prosperity but ensured that it was shared. And, besides bargaining power, they offered workers a collective voice in corporate politics and policy.

Over the next thirty years, however, labor’s bargaining power collapsed. The seeds for this were sown when labor’s power was near its peak. The Taft-Hartley Act (1947) outlawed secondary labor actions (such as boycotts, or picketing by workers not involved in the dispute) and undermined union security in so-called “right to work” states, especially in the South, the rural Midwest, and the mountain West [see graphic below]. The Landrum-Griffin Act (1959) further constrained secondary labor actions and permitted non-members to vote in union elections, essentially inviting employers to hire scabs and then count scab votes against the fate of the unions.

These legal and political blows hit labor hard, slowing union growth in “right to work” states and putting the threat of flight to those states on the bargaining table. The damage mounted as the manufacturing base of the labor movement began to erode. And labor continued to reel through the concessionary bargaining of the 1970s and ’80s, an era highlighted by the filibuster of labor law reform in 1978, the Reagan administration’s crushing of the PATCO strike, the Chrysler bailout (which set the template for “too big to fail” corporate rescues built around deep concessions by workers), and the passage of anti-worker trade deals with Mexico and China. Employers intensified and refined their union-busting tactics, and the National Labor Relations Board, by turning a blind eye to employer tactics, if not giving them a wink, did little to stand in the way.

American Labor and American Inequality

The last forty years have seen a steady decline in union membership and density—and in union bargaining power, within and across economic sectors. The impact of all of this on wage inequality is a complex question—shaped by skill, occupation, education, and demographics—but the bottom line is clear: there is a demonstrable wage premium for union workers, more pronounced for lesser skilled workers, and spilling over to the benefit of non-union workers as well. This is true across the economy, though a few industries and cities highlight this most dramatically. Real wages in meatpacking, for example, fell by almost half from 1960 to 2000 (an era in which union power in the sector largely evaporated); in 1970 packinghouse wages were about 20 percent higher than the average manufacturing wage, but in 2002 they were 20 percent lower.

The wage effect alone underestimates the union contribution to shared prosperity. Unions not only raised the wage floor but also lowered the ceiling: union bargaining power has been shown to moderate the compensation of executives at unionized firms. Unions at midcentury also exerted considerable political clout, sustaining other political and economic choices (minimum wage, job-based health benefits, social security, high marginal tax rates) that dampened inequality. Union wages sustained stable demand for goods and services—understood as the linchpin for economic growth since the 1930s. Quite simply, unions made and sustained the middle class.

The net result is telling. Early in the century, when union membership stood at barely 10 percent of the labor force, inequality was stark: the share of national income going to the richest 10 percent of Americans stood at nearly 40 percent. This gap widened in the 1920s. But after the New Deal granted workers basic collective bargaining rights and union membership grew dramatically, an equally dramatic decline in income inequality followed. This yielded an era of broadly shared prosperity, running from the 1940s into the 1970s. Since then, the attack on unions (in the workplace, in the courts, and in public policy) and the corresponding drop in union membership have been matched by an increase in income inequality. Both union membership and inequality, in fact, have returned roughly to 1920s levels.

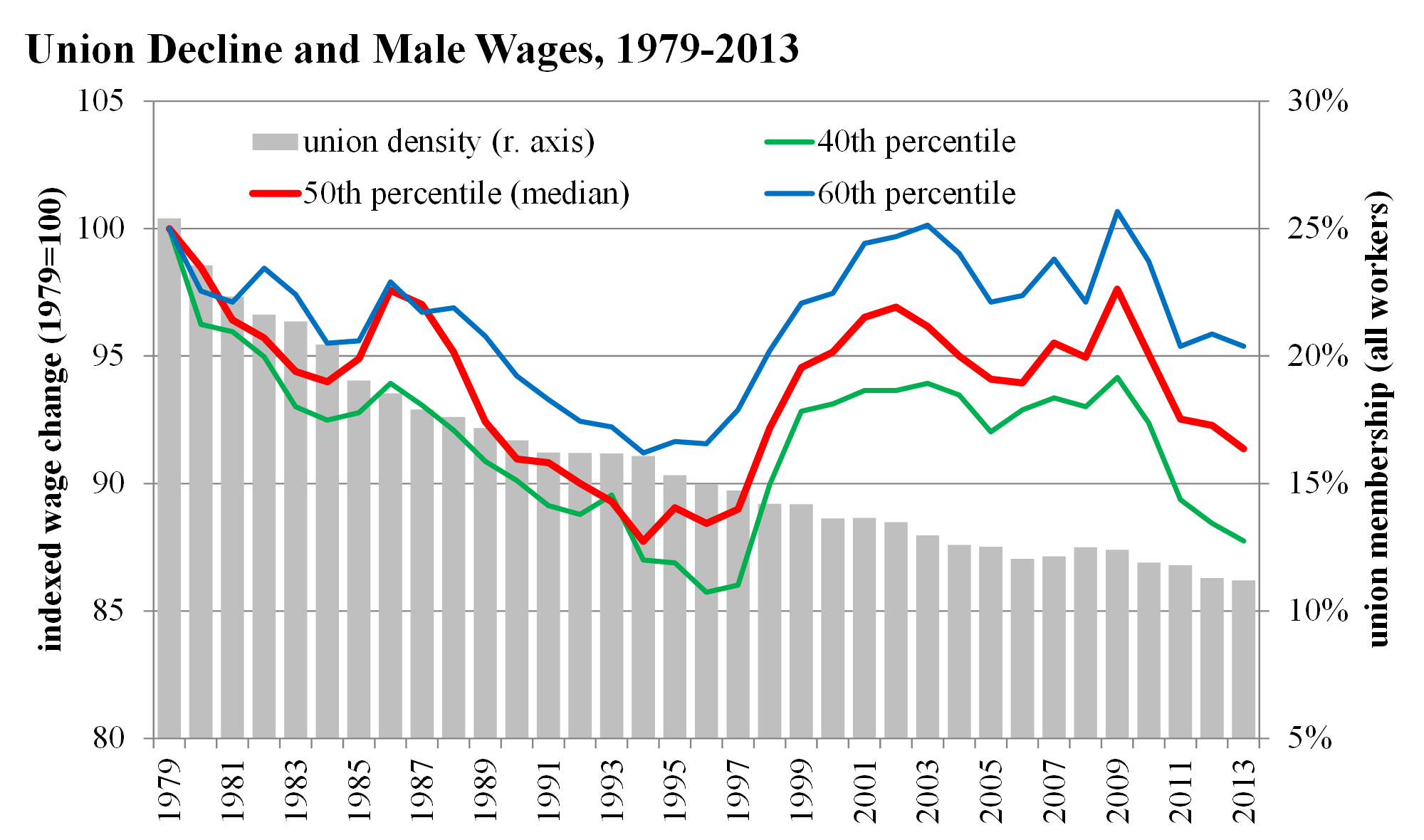

By most estimates, declining unionization accounted for about a third of the increase in inequality in the 1980s and 1990s. It has played a lesser role since then, as the decline in union density has slowed (or bottomed out) and inequality, in an era of financial deregulation, has grown, more markedly at the top of the income scale. We can see this play out starkly in the decline of median male wages (see figure below), which closely tracked the collapse of union density from the late 1970s into the late 1990s. Indeed, union losses account for virtually all of the growing gap between blue-collar and white-collar male workers from 1978 to 1988, and for more than three quarters of that gap over the last generation (1978-2011).

Rates of union coverage and decline vary widely by state [click here for graphic], a pattern rooted in different trajectories of economic development and differences in state labor law (for private and public sector workers). In turn, the relationship between union coverage and inequality varies widely by state [see graphic below]: in 1979, union stalwarts in the Northeast and Rust Belt states combined high rates of union coverage with relatively low rates of inequality, while just the opposite held true for the southern “right to work” states. In a large swath of states (including the upper Midwest, the mountain West, and the less urban industrialized states of the Northeast), declining union membership since 1989 has translated to steeper inequality. And not surprisingly, as state-level unionization rates have fallen, rates of working poverty have grown accordingly.

American Labor in International Perspective

It is hard to offer clean comparison with our international peers because union density and collective bargaining mean different things in different national contexts. But the gap between the United States and its peers is pretty clear. Unionism has a peculiar trajectory in the United States [click here for graphic]. Union density in the U.S. peaked earlier and at a much lower level than it did in most other countries. And peers such as Canada—in which early growth resembled that of the U.S.—have managed to sustain those gains.

Across the OECD, there is a clear and compelling relationship between falling union density and rising inequality—in which the character and timing of that inequality (in the U.S. and elsewhere) maps closely to the role of collective bargaining in setting wages. In 1980, collective bargaining coverage in the U.S., at 26 percent, was near the bottom of the OECD list; between 1980 and 2000, this fell by almost half. In both eras covered by the graphic below (mid-1980s and mid-2000s), the United States is an outlier on both inequality (measured here as the ratio between the 90th percentile wage and the 10th percentile wage) and union coverage.

For a generation after World War II, the economy and the wages of working Americans grew together—a clear and direct reflection of the bargaining power wielded by workers and their unions. From the early 1970s on, however, union strength fell—and with it the shared prosperity that it had helped to sustain. Labor productivity has almost doubled since 1973, but the median wage has grown only 4 percent. The share of national income going to wages and salaries has slipped, while the share going to corporate profits has risen. Inequality has widened, most dramatically for those who—at an earlier point in our history, or in any other democratic and industrialized setting—would benefit the most from collective bargaining.

How does this translate beyond the paystub? To take one example, union losses also undermine health coverage, health security, and health outcomes, since the US relies so heavily on job-based social benefits. More broadly, the decline of both public and private sector unions means the decline of one of the most potent political forces of the last century, and along with it, support for a wide range of public policies that might sustain working families and check corporate power.

Colin Gordon is a professor of history at the University of Iowa. He writes widely on the history of American public policy and is the author, most recently, of Growing Apart: A Political History of American Inequality.