The Perils of Private Welfare: Job-Based Benefits and American Inequality

American inequality is driven not just by the uneven distribution of wages, but also by the uneven distribution of job-based benefits. More than any other country, the United States relies on private employment and private bargaining to deliver basic social benefits—including health coverage, retirement security, and paid leave. The results—on any basic measure of economic security—have been dismal.

This series is adapted from Growing Apart: A Political History of American Inequality, a resource developed for the Project on Inequality and the Common Good at the Institute for Policy Studies and inequality.org. It is presented in nine parts. The introduction laid out the basic dimensions of American inequality and examined some of the usual explanatory suspects. The political explanation for American inequality is developed through chapters looking in turn at labor relations, the minimum wage and labor standards, job-based benefits, social policy, taxes, financialization, executive pay, and macroeconomic policy.

Reliance on private benefits made some sense in a mid-century economy organized around lifetime “family wage” employment in large and stable firms. But even under these circumstances, benefits bypassed many workers. Their coverage was always uncertain (loss of a job meant loss of benefits) and often capricious (consider the health and pension plans that routinely evaporate in corporate restructuring). Good benefits followed good jobs, widening the gap between low-wage workers and everyone else. The expectation of private coverage undercut public programs—which were often structured as ways of supplementing job-based plans or mopping up around their edges. And, across the last generation, the logic of delivering social policy via private employment unraveled with the economy on which it was based.

A Short History of Job-Based Benefits

The growth of job-based benefits proceeded fitfully in the first half of the twentieth century; given widespread anxieties about competitive disadvantage, only a few firms in select industries were willing to experiment at first, and it took the parallel emergence of public social programs to shape the kinds of benefits we’re familiar with today. Consider the trajectory of health insurance. Aside from a few union and cooperative experiments in the 1930s, insurance against illness (or wages lost due to illness) was relatively rare. All of this changed in the 1940s, when the combination of a stark labor shortage and inflation-anxious wage controls pressed employers to experiment with non-wage benefits—including job-based health insurance. The federal government encouraged this with the Revenue Act of 1942, exempting employer contributions to their health plans from payroll and income taxes.

Although seen as bearing little consequence at the time, this innovation came to dominate the logic and politics of American health care. In the early postwar years, labor, employers, insurers, and medical interests—each for their own reasons—championed job-based benefits as a source of general security and an alternative to public insurance. Through postwar bargaining, coverage under job-based plans broadened to spouses and dependents, and to a wider range of costs and services. Public programs, meanwhile, confined their attention to those too old, too young, or too categorically disadvantaged to rely on job-based coverage.

The reach of job-based health care plateaued at about seventy percent of the population in the early 1970s. After that, the pretension that employment-based benefits might serve as a surrogate for a national program was punctured by rising costs, declining coverage, and the steady erosion of benefits. The financing of health care was transformed as variations on health maintenance organizations and “managed care” displaced the older fee-for-service model. Employers chafed at the burden of health coverage and sought to redistribute the costs: some looked to political solutions that might spread the burden to their competitors or to other sectors of the economy, and others simply shuffled that burden onto the backs of their workers—or abandoned health care benefits altogether. In health care reform debates, the idea of subsidizing or mandating job-based coverage was gradually displaced by innovations in individual coverage—such as high-deductible plans, or the “individual mandate” embedded in the Affordable Care Act.

Steady losses in employer-sponsored coverage have been partially backfilled by public programs, but the categorical reach of these programs (children, the very poor) varies widely by state. In this sense, the unevenness of private coverage is magnified by uneven public coverage. The same states that have dug in against collective bargaining (and hence the extension of job-based health care) have also sustained the sparest public programs. Here the divide between North and South is especially pronounced. In Minnesota, for example, almost 70 percent are covered by job-based health plans, and adults (without dependent children) qualify for Medicaid when their incomes dip below 200 percent of the federal poverty threshold. In Mississippi, by contrast, barely half (54 percent) claim job-based coverage, and childless adults do not qualify for Medicaid until their incomes dip to a thin fraction (16 percent) of the federal poverty threshold.

As with health care, the private pension system grew sporadically, shaped by an uneasy relationship with public programs. Many larger employers experimented with pensions in the early twentieth century as a means of ensuring worker loyalty, and of easing workers into retirement as their productivity waned. By the early 1930s, about 15 percent of workers were eligible for private pensions. But most of these plans were crudely designed. Service and good behavior requirements meant that only about 5 percent of retirees were actually drawing benefits. And underfunding meant that most plans slid into bankruptcy when the Great Depression hit.

All of this changed in the 1930s and 1940s, when the combination of depression and war recast private and public views of retirement security. Central to the widespread adoption of private pensions in the United States was the fact that the public program—the old age provisions of the Social Security Act—came first. Employers proceeded on the assumption that Social Security would suffice for most workers, while serving as a foundation for more generous private plans aimed at higher earners. In this respect, the public program served as a hidden subsidy for private pensions—and effectively pushed their coverage up the income ladder.

Like health care, private pensions were reinvented during the war, when they served as a way of increasing compensation without running afoul of wage and price controls. They won federal blessing in the Revenue Act of 1942, whose antidiscrimination provisions pressed employers to extend their plans (many of which were little more than tax shelters for management) to most employees. And they became a staple of postwar bargaining, reaching 40 percent of private sector workers by 1960. Social Security remained meager enough to encourage “supplemental” private plans; those private plans, in turn, acted as a powerful argument against the expansion of public coverage.

Pension coverage grew modestly through the end of the twentieth century, aided after 1974 by the Employee Retirement Income Security Act (which established accounting and fiduciary standards) and the Pension Benefit Guaranty Corporation (which ensured the payment of benefits under most defined-benefit plans when the sponsoring firms went under). But, while pension coverage has not slipped as precipitously as health coverage over the last generation, retirement security has, like health security, been eroded by the changing form of coverage, as traditional “defined benefit” plans are displaced by riskier “defined contribution” plans.

Job-Based Benefits and American Inequality

As a system of general social provision, the uniquely American reliance on job-based benefits has always fallen short of providing real security. Such benefits have historically flowed to two groups of workers: those already well placed in the labor market (management, high earners), and those with the organizational clout to win health and retirement plans at the bargaining table.

As union density has plummeted, however, coverage of middle- and low-wage workers has fallen steeply. Meanwhile, many workers—including those in low-wage or part-time jobs, in entire sectors in which such benefits were rare, or in firms too small to qualify for group insurance—have never been covered. Job-based health insurance, having never reached more than about seventy percent of the workforce, has now slipped back to about 60 percent. Private pensions grew slowly to reach about half of the labor force, but have stuck there. As the terms of private employment have eroded, so too has the “family wage” security that once flowed from those jobs.

Since 1979, pension and health care coverage has fallen for almost all workers, and the losses are starkest for the most vulnerable [see graphic below]. Black and Hispanic workers (men especially), low-wage workers, and those with only a high-school education began this era with lower rates of coverage, and they have lost coverage at a faster rate than others. The rate of health care coverage declines markedly as you descend the wage ladder, and has fallen most dramatically over the last thirty years for the lowest-wage workers: by 2010, only about a quarter of workers in the bottom quintile were covered by job-based health insurance.

While the reach of private pensions has declined less dramatically, and has actually inched up for some women, coverage remains sparse (and is getting sparser) for low-wage workers. The share of workers offered a pension, and the rate at which workers participate, both decline with income. While about seventy percent of upper-income earners have a pension, barely a quarter of lower-income workers claim the same security. Not surprisingly, the income gap in participation rates is even starker.

And even these numbers understate the damage, because the quality and security of that coverage have also collapsed. As pension coverage has slipped, defined-contribution plans, which expose workers to investment risks (the poor performance of invested contributions) and longevity risks (the possibility of running out of money in retirement), have largely displaced traditional defined-benefit plans. The importance of this shift is profound: by virtually any measure (age, income, race, gender, educational attainment, marital status), 401(k)-style defined-contribution plans provide uneven security, and widen background income inequalities.

This steady erosion of retirement security reflects the declining bargaining power of workers, and the effort of employers to evade the market, regulatory, and actuarial risk of defined-benefit plans. What evolved, in the middle years of the twentieth century, as an effort to broaden the reach of private pensions as a complement to Social Security, is now moving in very nearly the opposite direction. In 1983, just under a third of American families were at risk of being unable to sustain their pre-retirement standard of living into retirement; by 2009, that had swollen to over half. The rate of poverty among older households is about nine times greater for those without the income of a defined-benefit plan, a gap that has grown markedly in recent years.

In the case of health care, insecurity and inequality are driven by a combination of declining coverage, declining plan quality, and rising costs. As coverage has slipped, the out-of-pocket costs (premiums, co-payments, deductibles) for enrolled workers have continued to climb at a rate that easily outpaces earnings or inflation. From 1999 to 2013, the average annual premium for single coverage more than doubled (from just about $2,200 to just under $5,900); and the average annual premium for family coverage nearly tripled (from about $5,800 to about $16,400).
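The premium figures above can be checked with a little arithmetic. The short Python sketch below uses only the approximate dollar amounts quoted in the text; the variable names and the compound annual growth calculation are illustrative assumptions rather than part of the original analysis.

```python
# Approximate average annual premiums quoted in the text, in dollars:
# (1999 value, 2013 value). These are rounded figures, not source data.
premiums = {
    "single": (2200, 5900),
    "family": (5800, 16400),
}
YEARS = 2013 - 1999  # 14 years between the two observations


def growth(start, end, years):
    """Return the overall growth ratio and the implied average annual rate.

    The annual rate assumes smooth compound growth, which is a
    simplification introduced here for illustration.
    """
    ratio = end / start
    annual = ratio ** (1 / years) - 1
    return ratio, annual


results = {kind: growth(start, end, YEARS) for kind, (start, end) in premiums.items()}

for kind, (ratio, annual) in results.items():
    print(f"{kind}: {ratio:.2f}x overall, about {annual:.1%} per year")
```

On these rounded figures, both premiums grew to somewhere between two and a half and three times their 1999 level, which squares with "more than doubled" and "nearly tripled."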

This cost crunch has led to declining rates of job-based insurance, as employers retreat from offering coverage and employees hesitate to assume the costs (especially for family plans) even when it is offered. When coverage is available, it tends to squeeze wages. Employers’ health costs have risen from less than one-half of one percent of wages in 1948 to nearly ten percent today. The absence of stable health coverage dramatically increases economic insecurity, including income volatility and risk of bankruptcy (accounting for one-half to two-thirds of all personal bankruptcies prior to the recession). The inequality that flows from job-based health coverage is partially (but only partially) addressed by the Affordable Care Act (ACA), whose long-term impact on rates of uninsurance, relationship to job-based plans and other public programs, and ability to rein in costs are still uncertain.

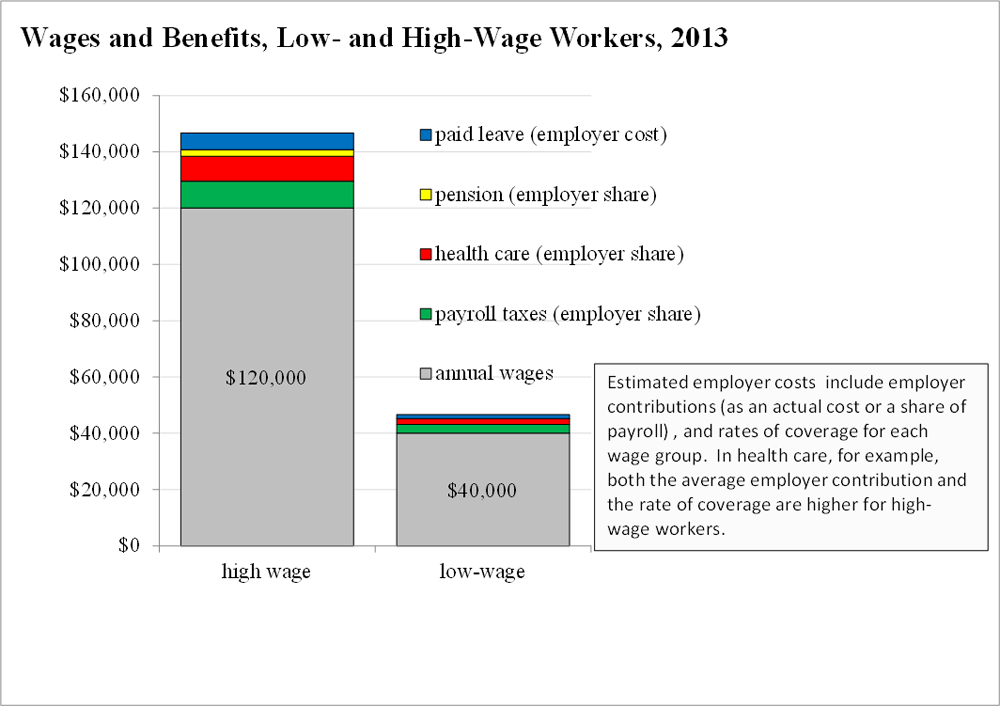

The effect of this reliance on job-based benefits is suggested in the graphic below, which sketches wages and total compensation (wages plus benefits) for workers earning at the 20th percentile (annual wage of about $40,000 in 2013) and at the 80th percentile (about $120,000 in 2013). Each element of non-wage compensation (paid leave, pensions, health care) reflects both the cost to the employer (as a percentage of payroll or as an actual cost), and the likelihood that a worker in that wage cohort has access to the benefit. Since high-wage workers enjoy both more generous benefits and higher rates of coverage, private benefits widen the gap. The low-wage worker claims about $6,500 in non-wage compensation (a bump of about 15 percent on basic wages); the high-wage worker claims over $26,000 (a bump of over 20 percent).
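The way benefits widen the gap can be worked through from the figures in this paragraph. The Python sketch below is a rough illustration only: it takes the rounded wage and benefit amounts quoted above (treating "over $26,000" as exactly $26,000, so the high-wage numbers are a lower bound), and the names used are hypothetical.

```python
# Rounded 2013 figures quoted in the text, in dollars.
# "over $26,000" is entered as 26,000, so the high-wage results are a floor.
cohorts = {
    "20th percentile": {"wage": 40_000, "benefits": 6_500},
    "80th percentile": {"wage": 120_000, "benefits": 26_000},
}


def summarize(wage, benefits):
    """Total compensation and the benefit 'bump' as a share of wages."""
    return {"total": wage + benefits, "bump": benefits / wage}


summary = {name: summarize(**figures) for name, figures in cohorts.items()}
low = summary["20th percentile"]
high = summary["80th percentile"]

# Compare the gap in wages alone with the gap in total compensation.
wage_ratio = cohorts["80th percentile"]["wage"] / cohorts["20th percentile"]["wage"]
total_ratio = high["total"] / low["total"]

for name, row in summary.items():
    print(f"{name}: total ${row['total']:,}, bump {row['bump']:.1%}")
print(f"80/20 ratio: {wage_ratio:.2f} on wages, {total_ratio:.2f} on total compensation")
```

On these numbers the high-wage worker earns three times the low-wage worker's wages, and slightly more than three times the total compensation, because the benefit bump is proportionally larger at the top.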

Job-Based Benefits in International Perspective

An international perspective puts these patterns—and the exceptional U.S. trajectory—in sharp relief. The United States notoriously spends more on health and gets less in return than any of its peers. We spend as much in public dollars as most of our OECD peers, and then spend as much again in private dollars—for a per capita cost, a health spending share of GDP, and an out-of-pocket burden that are all roughly double the OECD average. None of this largesse is reflected in health outcomes, on which the United States ranks at or near the bottom on most peer rankings [see graphic below]. A narrower comparison with Canada—a country with similar demographics and political structure—is especially instructive. Since 1960, Canadian health spending has grown more slowly—as a share of the economy or on a per capita basis—while offering universal coverage and higher scores on all key health outcome metrics.

While the diversity of pension systems across the OECD makes direct comparison difficult, it is clear that the uneven coverage and risk of the private pension system in the United States undermine the relative security of the retirement system. Compared to its peers, the American system does relatively little either to pool retirement risk or to offset that risk with robust public coverage. On the Mercer Global Pension Index, the United States ranks in the middle of the pack of twenty nations, but falls to fifteenth place on adequacy (benefits and benefit design) and fourteenth on integrity (regulation and governance). On the standard OECD measures (the rate at which pension systems replace the incomes of workers, and the accumulation of pension wealth), the United States ranks near the back of the pack [see graphic].

International comparison only underscores the dismal historical record of American job-based benefits. In the first generation after World War II, private benefits fell unevenly across the economy, and either discouraged public alternatives or limited their reach. Since then, private coverage has sagged and public programs have picked up only some of the slack. Across this full history, the overblown promise of private coverage pushed public programs to the categorical margins. As a result, working Americans enjoy less security—in international or historical terms—against the risks of retirement or illness. The private welfare state, in this sense, is a little like a private school or a private jet—not just a different way of delivering the goods, but a reliable and deliberate mechanism for sustaining inequality.

Colin Gordon is a professor of history at the University of Iowa. He writes widely on the history of American public policy and is the author, most recently, of Growing Apart: A Political History of American Inequality.